I keep a digital garden. It is a space where I think out loud, connect ideas over time, and publish things I have actually worked through. That practice is now caught in the middle of three forces that generative AI has set in motion: it makes writing better, it floods the web with generated content, and it feeds on the very gardens it degrades.

The Upside: Writing with an LLM

The most immediate benefit is collaboration. An LLM can act as a sounding board for brainstorming, push back on weak arguments, and help tighten prose. Jorge Arango described this as the “amanuensis” role[1], where the machine handles mechanical work while the human steers the thinking. LLMs can also assist with research by running searches and collating results across sources.

This is the part that works well, and it is the reason I use LLMs in my own writing process. The key is that the human remains the author. The LLM accelerates; it does not originate.

The Flood: Generated Content at Scale

The problem starts when that acceleration is pointed at volume instead of quality. A single person with an LLM can produce more content in a day than they could write in a month. Multiply that across millions of users and you get what some have started calling the “Slopocene”[2], a web increasingly dominated by low-effort generated text that is hard to distinguish from human writing.

The downstream effects compound. Search engines surface generated results alongside human ones with no reliable way to tell them apart. False information scales faster than correction. Feedback and discussion on posts become shallow when bots participate alongside people. The overall signal-to-noise ratio drops, and with it, the trust that makes digital gardens worth reading in the first place.

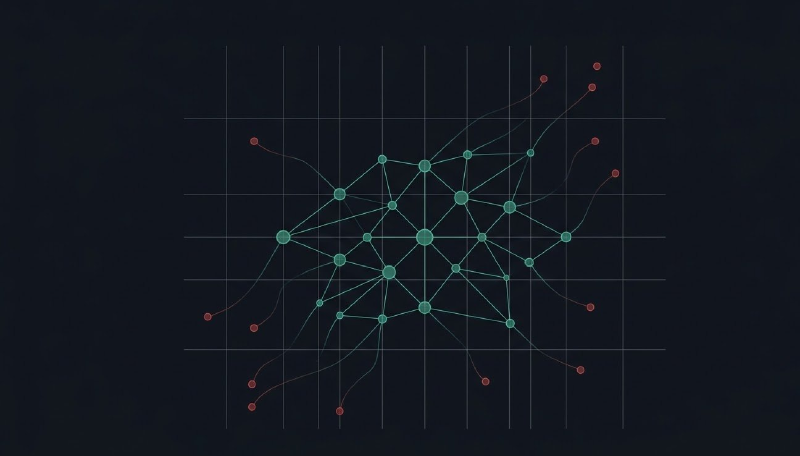

Maggie Appleton has written extensively about this trajectory. She describes the result as a “Dark Forest”[3], borrowing the name of a proposed solution to the Fermi Paradox in which anything that makes itself visible gets destroyed. In the digital version, quality public content attracts bot attention, scraping, and imitation, which pushes genuine creators toward smaller, private spaces. Her illustrations of this dynamic are worth seeing in the original article[4].

There is a recursive problem here too. When generated content gets harvested and fed back into model training, model performance degrades over successive generations. Researchers call this Model Autophagy Disorder (MAD), and it means the flood is not just a content problem but a training data problem that makes future models worse.
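The mechanism is easier to see in a toy version. The sketch below is an illustration only, not the experiment from the MAD research: it assumes the "model" is nothing more than a Gaussian fitted to ten data points, and that each generation is trained solely on samples drawn from the previous fit. The function name, sample size, generation count, and seed are arbitrary choices made for the example.

```python
import random
import statistics


def simulate_self_training(generations=200, sample_size=10, seed=0):
    """Refit a Gaussian to its own samples; return the fitted spread per generation.

    Toy assumption: the "model" is just a mean and a standard deviation.
    """
    rng = random.Random(seed)
    # Generation 0: "human" data drawn from a standard normal distribution.
    data = [rng.gauss(0.0, 1.0) for _ in range(sample_size)]
    spreads = []
    for _ in range(generations):
        # "Train": estimate mean and standard deviation from the current data.
        mean = statistics.fmean(data)
        spread = statistics.pstdev(data)
        spreads.append(spread)
        # "Generate": the next generation sees only the model's own output.
        data = [rng.gauss(mean, spread) for _ in range(sample_size)]
    return spreads


spreads = simulate_self_training()
print(f"fitted spread, first generation: {spreads[0]:.4f}")
print(f"fitted spread, last generation:  {spreads[-1]:.3e}")
```

With these settings the fitted spread should shrink by orders of magnitude. Each refit on a small sample tends to lose a little of the tails, and nothing in the loop puts them back. Real models are far more complex, but the loss of diversity is the same kind of failure.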

The Harvest: Data as Raw Material

Using the data commons for private profit is not new. Generative AI has intensified the practice because training requires enormous volumes of text, and digital gardens, with their human-curated, publicly accessible content, are prime targets.

Lars Doucet mapped out where this leads[5]. First, a “Market for Lemons” dynamic takes hold in the digital world: when spam and generated content become indistinguishable from genuine work, the perceived quality of everything drops. Second, a “Great Logging Off” follows as creators of all types retreat from the public internet. Third, the remaining communities wall themselves off into private spaces to prevent bot scraping[6].

The effect is something like a bottom trawler that destroys the ecosystem while gathering its catch. Digital gardens could disappear as a public good, not because people stop writing, but because the open web becomes too hostile to sustain them.

The Tensions That Hold

These forces create a set of interlocking conflicts that have no clean resolution yet.

On the data side, training new models requires vast amounts of high-quality human text. That text has to come from somewhere, and the economics incentivize model makers to avoid paying fair market rates for it[7]. Content creators and model makers end up in direct opposition, and it does not help that the industry’s early data acquisition practices were liberal at best. The same training data is needed to address the risks created by generated content and to keep models current, so the demand is not going away.

On the content side, generation is extraordinarily cheap compared to human authorship. That cost advantage is attractive to many business models, but the resulting abundance displaces human work and erodes authenticity. The generated data cycles back into training corpora, contributing to model collapse and content homogenization. The very cheapness that makes generated content attractive undermines the quality that gave it value.

As of this writing, the conflicts are playing out through lawsuits and regulatory proposals, but nothing has resolved the fundamental tension between the demand for training data and the rights of the people who created it.

What This Means for This Site

I publish here because I want to think in public and have those thoughts be findable by other practitioners. Every tension described above pushes against that goal. The flood makes it harder to be found. The harvest means anything I publish becomes training material. The erosion of trust means even genuine writing gets viewed with suspicion.

I do not have a solution to any of that. What I do have is a practice: write things I have actually worked through, show the reasoning, attribute properly, and publish under my own name on a domain I control. That is a small bet that authenticity still matters, even in a landscape that is actively selecting against it.

Footnotes

1. The amanuensis role is described by Jorge Arango in “Three Roles for Robots”.

2. The term captures the state of a web increasingly flooded with low-quality generated content. It fits better than “Generated Web” because it emphasizes the quality problem, not just the origin.

3. Described in Maggie Appleton’s “The Expanding Dark Forest and Generative AI”. The Dark Forest term originally refers to a solution to the Fermi Paradox where intelligent life that announces itself is soon extinguished. In the digital version, quality public content that attracts attention gets consumed by bots.

4. See Appleton’s article and her conversation on The Informed Life for the full visual treatment.

5. See Lars Doucet, “AI: Markets for Lemons, and the Great Logging Off”.

6. Cloudflare has created anti-bot scraping measures such as redirecting bots into mazes from which they never escape, partly because many bots ignore the robots.txt convention.

7. This feeds the “Data is the New Oil” framing. I am not sold on the analogy as a whole, but the demand side is real.